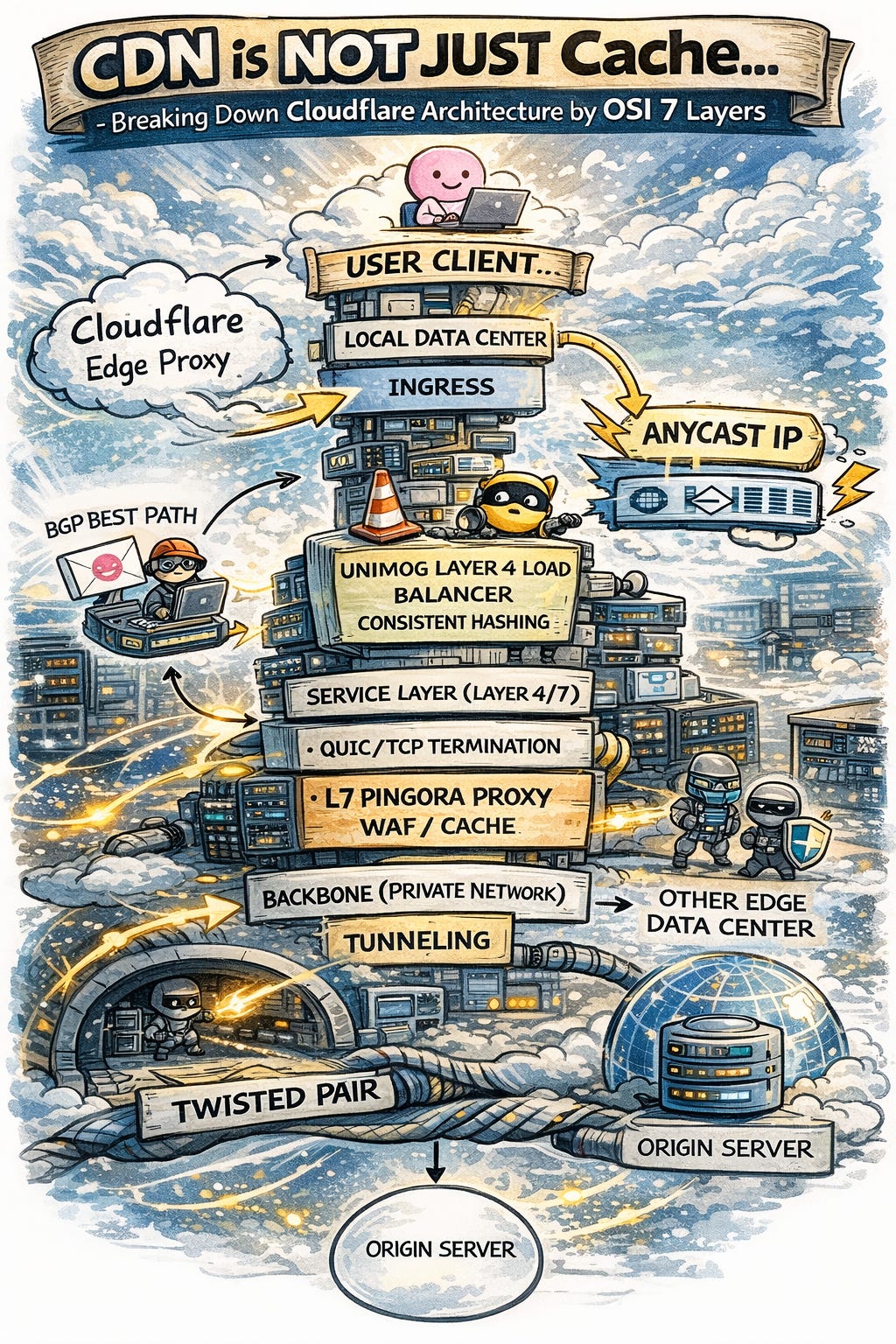

CDN is More Than Just Cache: Breaking Down Cloudflare’s Architecture by OSI 7 Layers

Analysis of the Technology Stack: BGP, Anycast, Unimog, QUIC, and Pingora

Cloudflare, a dominant force in the CDN market, provides far more than simple caching. It integrates WAF and DDoS protection, and even allows engineers to execute JavaScript at the edge via Cloudflare Workers. It has effectively become one of the most critical pieces of infrastructure on the modern internet.

What does the architecture of such a vital infrastructure look like? Let’s explore through the following deep dive:

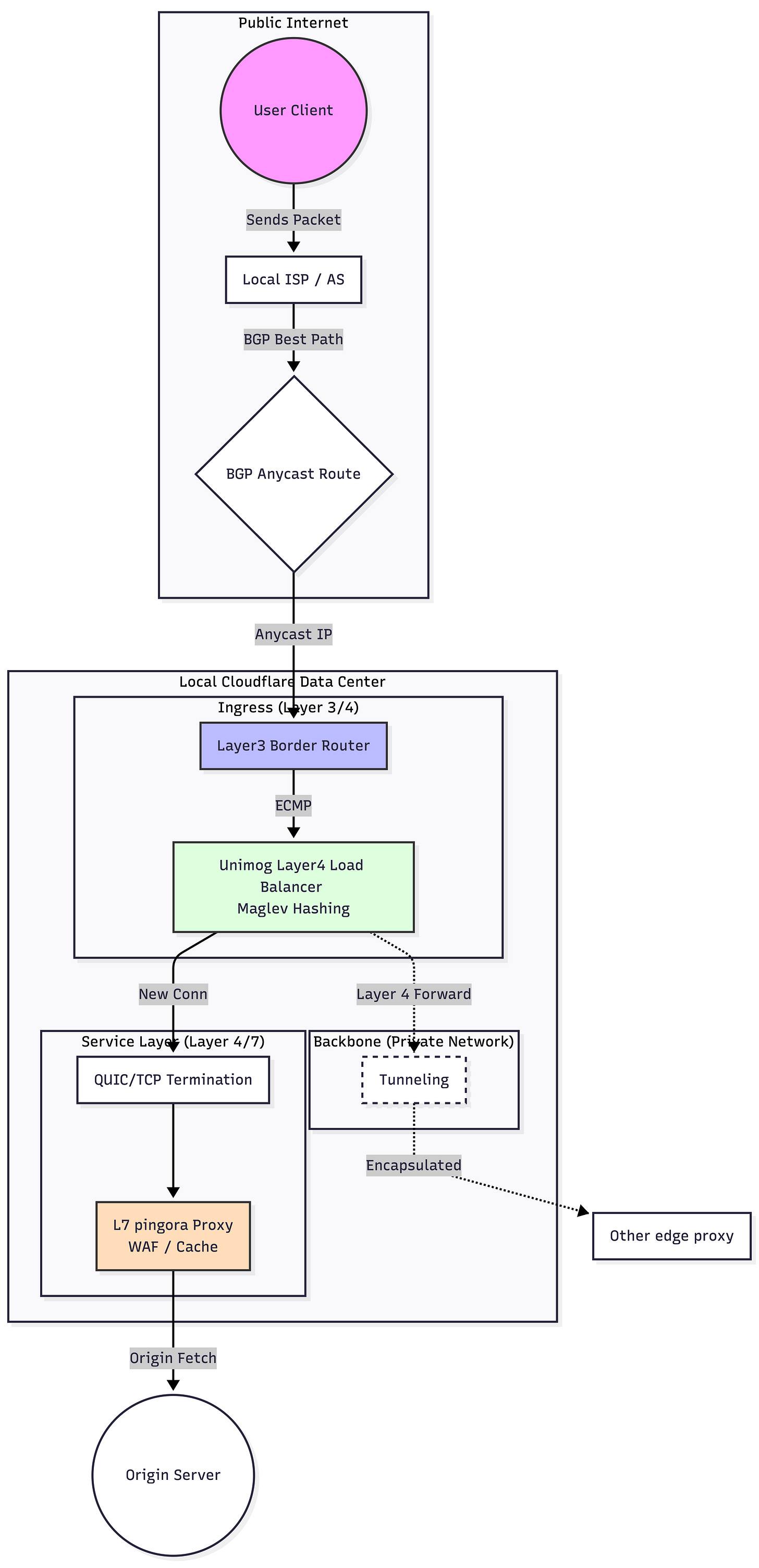

How to Map a Single Domain to Global Edge Proxies?

The primary function of a CDN is to route requests to the nearest edge proxy based on the user's location.

Typically, one domain maps to one public IP. Geo DNS supports mapping the same domain to different public IPs based on geography. During a recursive DNS query, the Geo DNS server identifies the client’s location via their IP and returns the corresponding regional public IP.
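As a rough sketch of the lookup described above, the snippet below maps a client IP to a regional edge IP. The table, the `resolve` function, and every address except the article's 36.224.0.0/13 example are hypothetical; real Geo DNS servers rely on much larger geolocation databases.

```python
import ipaddress

# Hypothetical table: client address range -> (region, regional edge IP).
GEO_TABLE = [
    (ipaddress.ip_network("36.224.0.0/13"), "TW", "203.0.113.10"),
    (ipaddress.ip_network("198.51.100.0/24"), "JP", "203.0.113.20"),
]
DEFAULT_EDGE_IP = "203.0.113.30"  # assumed fallback when no range matches


def resolve(domain: str, client_ip: str) -> str:
    """Return the edge IP of the region that the client's IP falls into."""
    addr = ipaddress.ip_address(client_ip)
    for network, _region, edge_ip in GEO_TABLE:
        if addr in network:
            return edge_ip
    return DEFAULT_EDGE_IP


print(resolve("example.com", "36.224.1.1"))    # client inside the Taiwan range
print(resolve("example.com", "198.51.100.7"))  # client inside the Japan range
```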

However, this method has flaws:

Latency: If the Geo DNS server is in the US, the query might travel a long distance just to find a public IP.

DNS Caching: DNS has TTL (Time to Live) values. If a public IP needs to be replaced due to a failure, it takes time for the change to propagate globally, preventing instant failover.

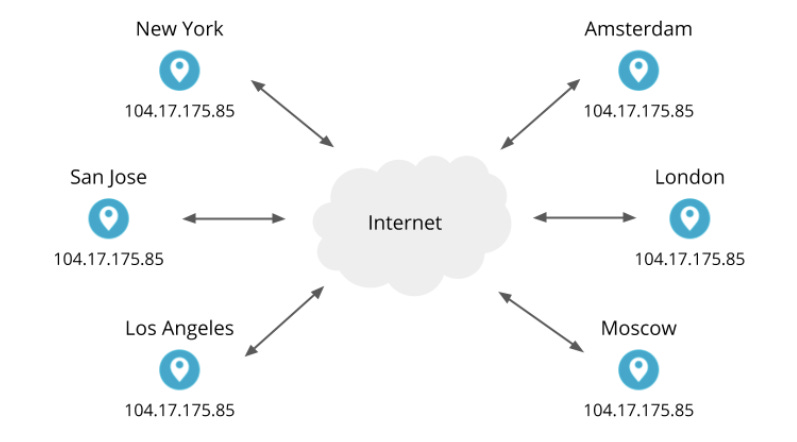

To solve this, Cloudflare uses Anycast technology to map a single public IP to physical machines in multiple locations simultaneously.

image source: https://blog.cloudflare.com/unimog-cloudflares-edge-load-balancer/

How Does Anycast Map One IP to Multiple Locations?

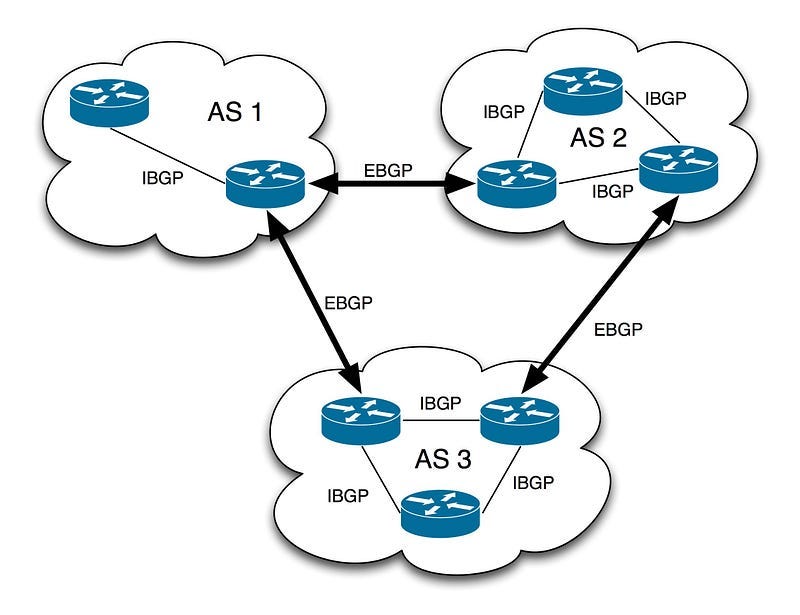

Networks are divided into LANs and WANs. Within a region, an Autonomous System (AS), such as an ISP, manages a specific network range (e.g., 36.224.0.0/13). To carry packets across the WAN between ASs, border routers use the Border Gateway Protocol (BGP) to exchange routing information (i.e., which IP prefixes each AS can reach).

image source: https://www.cloudns.net/blog/understanding-bgp-a-comprehensive-guide-for-beginners/

For example, an ISP in Taiwan uses BGP to announce to an ISP in Japan that it is responsible for a specific IP range (e.g., 36.224.0.0/13). When a Japanese user sends a request to an IP within that range (e.g., 36.224.1.1), the Japanese ISP's routing table directs the packet to Taiwan’s border router. That border router then forwards the request to the final destination server within the local network.
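The routing-table lookup in this example can be sketched as a longest-prefix match, which is how IP routers generally choose among overlapping routes. The route names and the default route are made up for illustration; only the 36.224.0.0/13 prefix comes from the example above.

```python
import ipaddress

# Hypothetical routing table of the Japanese ISP: (prefix, next hop).
ROUTES = [
    (ipaddress.ip_network("0.0.0.0/0"), "upstream-transit"),
    (ipaddress.ip_network("36.224.0.0/13"), "taiwan-border-router"),
]


def next_hop(dest_ip: str) -> str:
    """Pick the matching route with the longest (most specific) prefix."""
    addr = ipaddress.ip_address(dest_ip)
    matches = [(net, hop) for net, hop in ROUTES if addr in net]
    _net, hop = max(matches, key=lambda m: m[0].prefixlen)
    return hop


print(next_hop("36.224.1.1"))  # learned via BGP from the Taiwanese ISP
print(next_hop("192.0.2.1"))   # falls back to the default route
```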

Anycast registers the same IP Prefix with different ASs via multiple border routers. Because different border routers maintain different routing tables, the same public IP will be forwarded to different locations depending on the source AS.

Cloudflare leverages its status as an independent AS to register IP prefixes at the edge of various networks globally. This ensures that a Taiwan-based request reaches a Taiwan edge proxy via a local border router, minimizing latency by keeping traffic local.

BGP-based routing operates at Layer 3, so forwarding doesn’t require deep packet inspection, which keeps performance and availability high. If a Japan data center fails and its announcement is withdrawn, BGP automatically converges on the next best path (e.g., to Taiwan) for that same IP.

Is Anycast + BGP the Perfect Solution?

Not quite. BGP routing isn’t based on geographic distance or RTT (Round Trip Time); it’s based on AS Path cost, which is largely determined by administrative policies.

For example, ASs may charge each other for transit. Network administrators can set the BGP path preference based on peering costs rather than geographic distance. To mitigate this, Cloudflare establishes direct peering with ASs so that the local path takes priority.
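To make the policy-over-distance point concrete, here is a heavily simplified sketch of BGP best-path selection: the operator-assigned local preference is compared first, and AS path length only breaks ties. Real BGP has more tie-breakers. The `Route` class, the AS numbers (taken from the range reserved for documentation), and the preference values are all assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Route:
    site: str         # where this path ends up
    as_path: tuple    # AS numbers the announcement travelled through
    local_pref: int   # operator policy; higher wins


def best_path(routes):
    """Highest local_pref wins; AS path length only breaks ties."""
    return min(routes, key=lambda r: (-r.local_pref, len(r.as_path)))


# A short local path over paid transit vs. a longer path over a cheaper link.
via_paid_transit = Route("taiwan", as_path=(64500, 64511), local_pref=100)
via_cheap_remote = Route("japan", as_path=(64501, 64502, 64511), local_pref=200)
print(best_path([via_paid_transit, via_cheap_remote]).site)  # japan

# With direct peering, the local path is both short and preferred.
direct_peering = Route("taiwan", as_path=(64511,), local_pref=300)
print(best_path([via_paid_transit, via_cheap_remote, direct_peering]).site)  # taiwan
```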

Note: You can refer to the detailed BGP routing rules here.

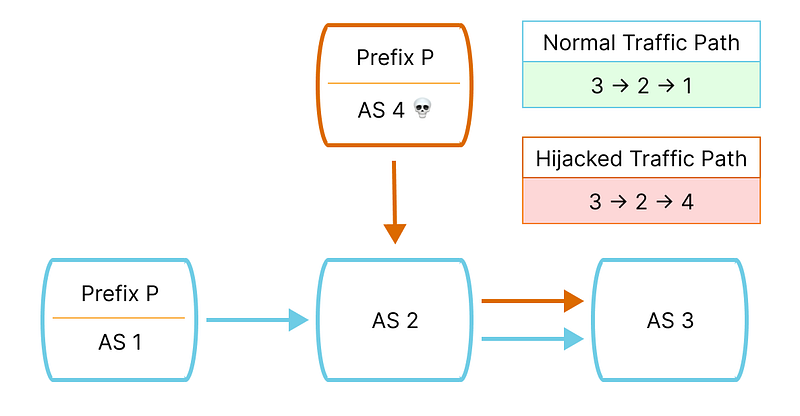

Additionally, BGP is vulnerable to hijacking. A hacker can announce the same IP Prefix to other ASs with a lower “cost,” diverting legitimate Cloudflare traffic to their own servers.

image source: https://blog.cloudflare.com/bgp-hijack-detection/

The solution is to cryptographically verify that an AS is authorized to announce a prefix (similar to HTTPS certificates; this mechanism is known as RPKI), though not all ISPs implement this yet. You can use https://isbgpsafeyet.com/ to check whether your ISP has implemented a verification process.
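One common form of this verification is RPKI route origin validation, where a signed record (a ROA) states which AS may originate a prefix and how specific the announcement may be. The sketch below shows only the matching logic, not the cryptography. The `ROA` class, the `validate` function, and all prefixes and AS numbers are illustrative.

```python
import ipaddress
from dataclasses import dataclass


@dataclass(frozen=True)
class ROA:
    prefix: str       # address block the record covers
    max_length: int   # most specific prefix length that may be announced
    origin_as: int    # AS authorized to originate the prefix


def validate(announced_prefix: str, origin_as: int, roas) -> str:
    """Classify an announcement as 'valid', 'invalid', or 'not-found'."""
    announced = ipaddress.ip_network(announced_prefix)
    covered = False
    for roa in roas:
        roa_net = ipaddress.ip_network(roa.prefix)
        if announced.version == roa_net.version and announced.subnet_of(roa_net):
            covered = True
            if origin_as == roa.origin_as and announced.prefixlen <= roa.max_length:
                return "valid"
    return "invalid" if covered else "not-found"


roas = [ROA("203.0.113.0/24", max_length=24, origin_as=64500)]
print(validate("203.0.113.0/24", 64500, roas))   # legitimate origin
print(validate("203.0.113.0/24", 64501, roas))   # hijacker's AS
print(validate("198.51.100.0/24", 64501, roas))  # no record covers this prefix
```

A router that enforces this policy would drop the "invalid" announcement instead of installing it in its routing table.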

Additionally, the nature of Anycast allows multiple proxy servers in the same region to share the same public IP. This can lead to the following issue: TCP packets belonging to the same connection might be routed to different servers.

Once a client establishes a TCP connection with a server, the server’s kernel stores the TCP connection ID (src_ip, src_port, dest_ip, dest_port) and its corresponding state (such as which packets have been received and the expected sequence number of the next packet) in memory to ensure packet ordering. If a packet from that same TCP connection is sent to a different server that lacks the specific connection state, that server will reject it (typically by replying with a RST), and the connection breaks.

How to Solve TCP Instability in Anycast

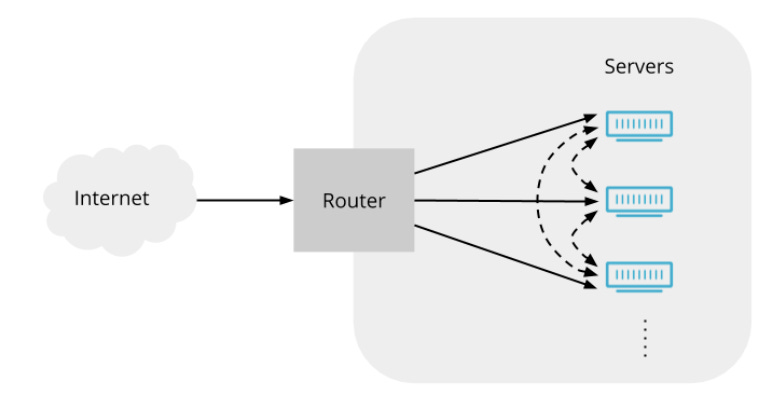

The network path for a packet is:

Client → ISP Gateway → BGP Selection → Border Gateway

Border Gateway → NAT (Public IP to Private IP)

NAT → Private Network Edge Proxy

Routers use ECMP (Equal-Cost Multi-Path) to hash a connection's "4-tuple" (Source/Dest IP and Port) so that packets from the same connection go to the same proxy.

However, when edge proxies are added or removed, the ECMP hash maps existing connections to different proxies, which breaks them. ECMP also cannot distribute traffic based on the actual workload of each proxy.
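The remapping problem can be reproduced with a toy model of ECMP as "hash of the 4-tuple modulo the number of proxies". Real routers use their own hardware hash functions; SHA-256, the proxy names, and the generated flows here are stand-ins.

```python
import hashlib


def flow_hash(four_tuple) -> int:
    """Deterministic hash of (src_ip, src_port, dest_ip, dest_port)."""
    data = "|".join(str(field) for field in four_tuple).encode()
    return int.from_bytes(hashlib.sha256(data).digest()[:8], "big")


def ecmp_pick(four_tuple, proxies):
    return proxies[flow_hash(four_tuple) % len(proxies)]


def remapped_flows(flows, before, after):
    """Flows that change proxy even though their old proxy is still present."""
    return [
        f for f in flows
        if ecmp_pick(f, before) in after and ecmp_pick(f, before) != ecmp_pick(f, after)
    ]


flows = [("198.51.100.7", 40000 + i, "203.0.113.1", 443) for i in range(1000)]
before = ["proxy-a", "proxy-b", "proxy-c", "proxy-d"]
after = ["proxy-a", "proxy-b", "proxy-c"]  # proxy-d is taken out of service

broken = remapped_flows(flows, before, after)
print(f"{len(broken)} of {len(flows)} flows moved although their proxy is still up")
```

Every flow counted in `broken` would land on a proxy that holds no TCP state for it.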

Therefore, Cloudflare developed the Unimog Layer 4 Load Balancer using XDP (eBPF), granting every proxy server the ability to forward packets. Because the XDP program is driven by a control plane running in a user-space process, the design is highly flexible: for instance, it can use Maglev hashing and monitor proxy workload to dynamically adjust routing.
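For readers unfamiliar with Maglev hashing, the sketch below builds a Maglev-style lookup table following the published algorithm: each backend walks its own permutation of table slots and claims the next free one, round-robin, until the table is full. This is not Unimog's code; the hash choice, table size, and backend names are assumptions.

```python
import hashlib


def _h(name: str, salt: str) -> int:
    return int.from_bytes(hashlib.sha256((salt + name).encode()).digest()[:8], "big")


def maglev_table(backends, size=251):
    """Build a lookup table; `size` must be a prime larger than len(backends)."""
    if not backends:
        raise ValueError("need at least one backend")
    offsets = [_h(b, "offset") % size for b in backends]
    skips = [_h(b, "skip") % (size - 1) + 1 for b in backends]
    next_index = [0] * len(backends)
    table = [None] * size
    filled = 0
    while filled < size:
        for i, backend in enumerate(backends):
            # Walk this backend's permutation until it reaches a free slot.
            slot = (offsets[i] + next_index[i] * skips[i]) % size
            while table[slot] is not None:
                next_index[i] += 1
                slot = (offsets[i] + next_index[i] * skips[i]) % size
            table[slot] = backend
            next_index[i] += 1
            filled += 1
            if filled == size:
                break
    return table


def lookup(table, four_tuple) -> str:
    key = "|".join(str(field) for field in four_tuple)
    return table[_h(key, "flow") % len(table)]


table = maglev_table(["proxy-a", "proxy-b", "proxy-c"])
print(lookup(table, ("198.51.100.7", 40001, "203.0.113.1", 443)))
```

Because the table is filled round-robin, each backend ends up with a nearly equal share of slots, and a control plane could rebuild the table when the backend set changes.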

Furthermore, because the proxies themselves possess forwarding capabilities, NAT redirection is no longer required. Instead, the proxy can use IP tunneling: it encapsulates the original packet in an outer packet addressed to the target proxy's private IP before sending it out.

image source: https://blog.cloudflare.com/unimog-cloudflares-edge-load-balancer/

In addition to the Layer 4 Load Balancer, Cloudflare utilizes another key technology at Layer 4: QUIC!

What is QUIC, and What Problems Does it Solve?

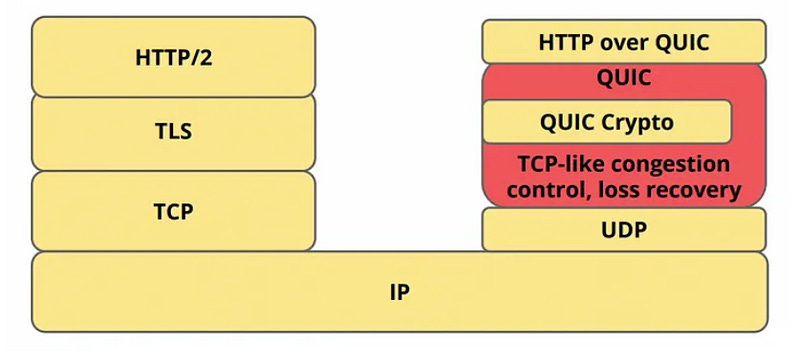

QUIC is a Layer 4 protocol built on UDP, used in HTTP/3 to solve performance bottlenecks in TCP:

Head-of-Line (HoL) Blocking:

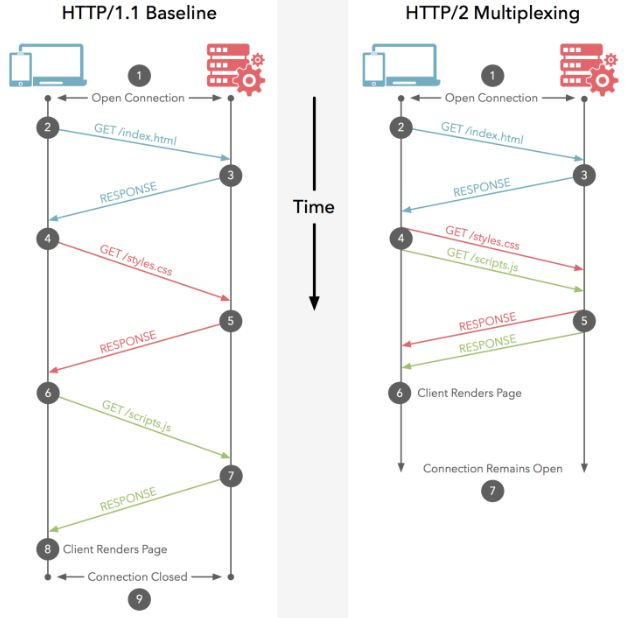

HTTP/2 resolves the Layer 7 Head-of-Line (HoL) Blocking issues found in HTTP/1. It allows multiple requests to be sent simultaneously over a single TCP connection without waiting for the response of the current request. Once the application layer receives the response packets for different requests, it uses stream IDs to reassemble them into the complete payload.

image source: https://juejin.cn/post/6844903984524705800

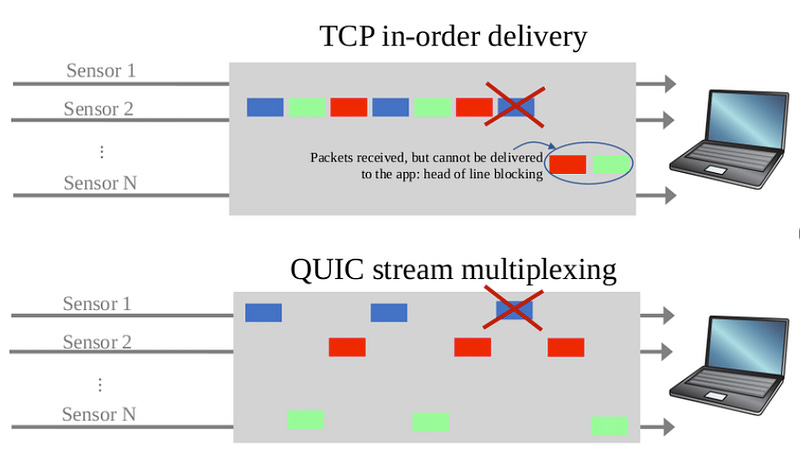

However, Head-of-Line Blocking also exists at Layer 4. From TCP’s perspective, it cannot distinguish between packets belonging to different requests; it treats them as part of the same connection and insists on receiving them in strict order.

If a single packet from “Response A” is lost, the kernel will not deliver the payload of “Response B” to the user-space process—even if all of Response B’s packets have arrived safely—until that missing packet is retransmitted and received.

QUIC’s solution is to move the stream ID logic from Layer 7 down to Layer 4. This ensures that while packets within a single stream must remain ordered, different streams are independent of one another. Furthermore, because HTTP/3 allows the application layer (e.g., the browser) to parse and reassemble payloads, QUIC removes the need for the kernel to maintain packet ordering for multiple streams, thereby reducing the complexity of kernel memory management.
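The difference between the two ordering models can be illustrated with a toy delivery simulation. It only models which packets may be handed to the application while a lost packet is awaiting retransmission; the packet layout and function names are invented for the example.

```python
# Packets in send order: (stream, position within that stream).
SENT = [("A", 0), ("B", 0), ("A", 1), ("B", 1)]
LOST = {0}  # index into SENT: the first packet of Response A is lost


def tcp_delivered(sent, lost):
    """One ordered byte stream: nothing after the first gap is delivered."""
    delivered = []
    for i, pkt in enumerate(sent):
        if i in lost:
            break
        delivered.append(pkt)
    return delivered


def quic_delivered(sent, lost):
    """Per-stream ordering: a gap only blocks the stream it belongs to."""
    blocked = set()
    delivered = []
    for i, (stream, position) in enumerate(sent):
        if i in lost:
            blocked.add(stream)
        elif stream not in blocked:
            delivered.append((stream, position))
    return delivered


print(tcp_delivered(SENT, LOST))   # []
print(quic_delivered(SENT, LOST))  # [('B', 0), ('B', 1)]
```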

image source: https://www.researchgate.net/figure/The-head-of-line-blocking-problem-and-the-stream-based-solution_fig1_344551663

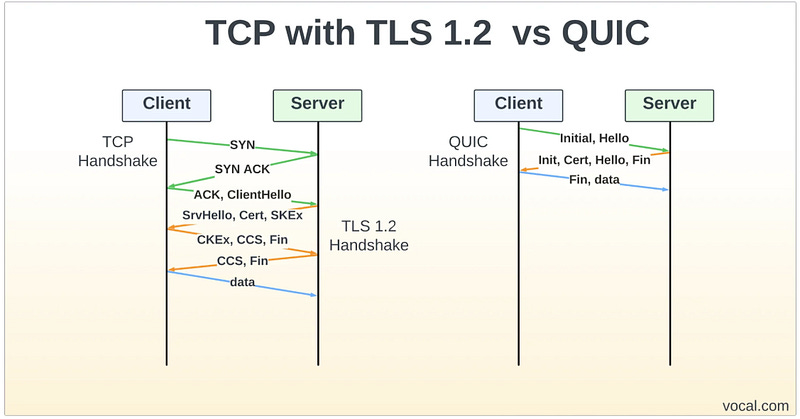

Connection Establishment Requires a Handshake

Before transmitting data, TCP requires a handshake process:

Client → SYN → Server

Server → SYN-ACK → Client

Client → ACK → Server

Only then can data transmission begin.

In contrast, QUIC has no separate SYN exchange: the client can send data along with its very first packet, effectively enabling 0-RTT (Zero Round-Trip Time) data transmission.

However, once the TLS key exchange is included, a first-time connection typically requires 1-RTT. The keys are agreed upon, for example, through the Diffie–Hellman algorithm:

Client → sends Public Key → Server.

Server uses its Private Key + Client’s Public Key to calculate a Shared Secret.

Server → sends a Session Ticket (i.e., the encrypted shared secret) + Server’s Public Key → Client.

Finally, the Client uses its own Private Key + Server’s Public Key to calculate the same Shared Secret. The client then encrypts its request using this secret and sends it to the server along with the session ticket.
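The key agreement above can be sketched with classic finite-field Diffie–Hellman. This is a toy: the modulus is far too small for real use, modern TLS normally uses elliptic-curve groups, and the function names are made up for illustration.

```python
import secrets

# Toy parameters (assumptions): a Mersenne prime modulus and a small base.
P = 2**127 - 1
G = 3


def keypair():
    """Return (private_key, public_key)."""
    private = secrets.randbelow(P - 2) + 1
    return private, pow(G, private, P)


def shared_secret(own_private: int, peer_public: int) -> int:
    return pow(peer_public, own_private, P)


client_private, client_public = keypair()
server_private, server_public = keypair()

# Each side combines its own private key with the other side's public key.
server_side = shared_secret(server_private, client_public)
client_side = shared_secret(client_private, server_public)
print(server_side == client_side)  # True
```

Only the public keys cross the network, yet both sides derive the same secret.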

image source: https://vocal.com/voip/quic/

Furthermore, session tickets can be stored on the client’s local disk, allowing them to be reused after a disconnection. Upon reconnecting, there is no need to exchange public keys or recalculate a shared secret; data can be sent immediately, achieving true 0-RTT.

However, this poses a risk of Replay Attacks. To mitigate this, the server-side periodically rotates the encryption keys used to generate session tickets, sets a short TTL (Time to Live) for the tickets, and only allows GET requests to utilize 0-RTT.

Beyond performance optimization, QUIC implements its own retransmission and flow control mechanisms. For example, if the server’s memory buffer is full, it will signal the client to stop sending data; similarly, if the RTT (Round-Trip Time) becomes too high, it will instruct the client to pause new transmissions until all missing packets are received.

Traditional TCP lacks the concept of “streams.” When a packet is retransmitted, its sequence number stays the same. This leads to ACK ambiguity, where the sender cannot distinguish whether an incoming ACK is for the original packet or the retransmitted one, making RTT calculations imprecise.

In contrast, QUIC’s ordering is determined per stream, and each stream has its own independent sequence. Packet numbers, however, increase globally (across all streams), and a retransmission is sent under a new packet number. This allows the sender to distinguish whether an ACK is for a retransmission or the original packet, leading to more accurate RTT measurements and more reliable flow control.
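A minimal sketch of why unique packet numbers remove the ambiguity: the sender records a send time per packet number, so an ACK always identifies exactly one transmission. The class, its methods, and the millisecond timestamps are hypothetical.

```python
class RttSampler:
    """Tracks send times by packet number, which is never reused."""

    def __init__(self):
        self.next_packet_number = 0
        self.sent_at_ms = {}  # packet number -> send time in milliseconds

    def send(self, stream_id: int, offset: int, now_ms: int) -> int:
        packet_number = self.next_packet_number
        self.next_packet_number += 1
        self.sent_at_ms[packet_number] = now_ms
        return packet_number

    def on_ack(self, packet_number: int, now_ms: int) -> int:
        """Return the RTT sample for exactly the acknowledged transmission."""
        return now_ms - self.sent_at_ms.pop(packet_number)


sampler = RttSampler()
original = sampler.send(stream_id=1, offset=0, now_ms=0)      # this one is lost
retransmit = sampler.send(stream_id=1, offset=0, now_ms=300)  # same data, new number
print(original, retransmit)                      # 0 1
print(sampler.on_ack(retransmit, now_ms=350))    # 50
```

With a shared sequence number, the same ACK could have been read as either 350 ms or 50 ms.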

Additionally, because QUIC’s retransmission and flow control are implemented in user space (while the kernel simply sees UDP), it offers much greater flexibility. It can be tailored to specific business requirements—for instance, in streaming scenarios where retransmission requirements are lower but strict flow control is essential.

image source: https://datatracker.ietf.org/meeting/98/materials/slides-98-edu-sessf-quic-tutorial-00.pdf

Beyond performance optimization, what other benefits does Cloudflare gain from using QUIC?

Cloudflare implemented QUIC in Rust (via the quiche library) and identified key advantages and challenges during implementation:

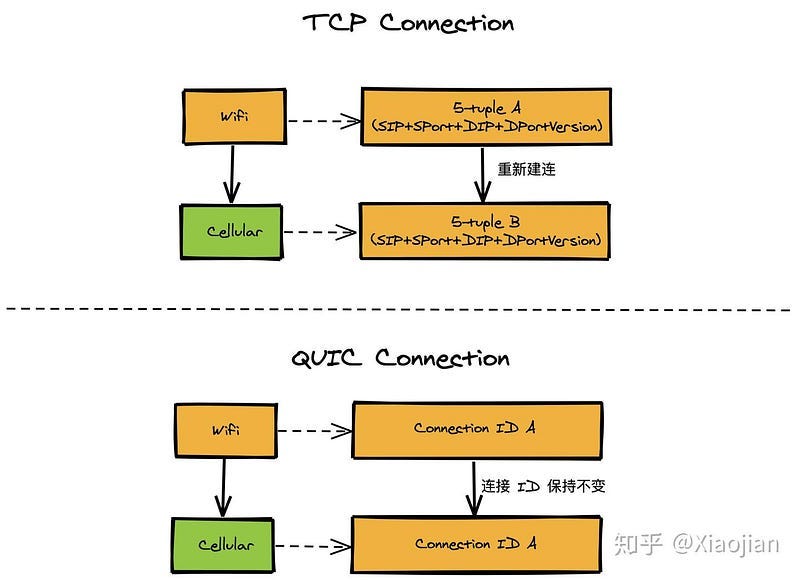

Benefit — Connection Migration

A TCP connection is identified by a 4-tuple: (src_ip, src_port, dest_ip, dest_port). Once a user switches networks and changes their source IP (e.g., moving from 5G to Wi-Fi), the connection must be re-established.

In contrast, QUIC uses a Connection ID (CID), which is a random number stored on the client side. As long as the CID remains the same, the state can be recognized even if the user switches networks.

As mentioned in the Anycast architecture earlier, standard routers at Layer 3 use ECMP to decide routes based on the 4-tuple hash. When a user’s source IP changes, the same CID would typically be forwarded to a different server. However, by adding CID-based forwarding to the Unimog Layer 4 load balancer, Cloudflare enables users to switch networks without dropping their connection.
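The idea of CID-based forwarding can be sketched as a balancer that hashes the Connection ID when one is present and falls back to the 4-tuple otherwise. Real deployments are more involved (connection IDs can change during a connection's lifetime), and the proxy names, hash, and function signature here are assumptions.

```python
import hashlib

PROXIES = ["proxy-a", "proxy-b", "proxy-c", "proxy-d"]


def _bucket(key: bytes) -> str:
    index = int.from_bytes(hashlib.sha256(key).digest()[:8], "big") % len(PROXIES)
    return PROXIES[index]


def pick_proxy(src_ip, src_port, dest_ip, dest_port, quic_cid=None) -> str:
    if quic_cid is not None:
        # QUIC: the connection ID identifies the connection, not the addresses.
        return _bucket(b"cid:" + quic_cid)
    # TCP: fall back to hashing the 4-tuple.
    return _bucket(f"{src_ip}|{src_port}|{dest_ip}|{dest_port}".encode())


cid = bytes.fromhex("a1b2c3d4e5f60718")
on_5g = pick_proxy("198.51.100.7", 40001, "203.0.113.1", 443, quic_cid=cid)
on_wifi = pick_proxy("192.0.2.99", 51515, "203.0.113.1", 443, quic_cid=cid)
print(on_5g == on_wifi)  # True: the source address no longer affects the choice
```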

image source: https://zhuanlan.zhihu.com/p/311221111

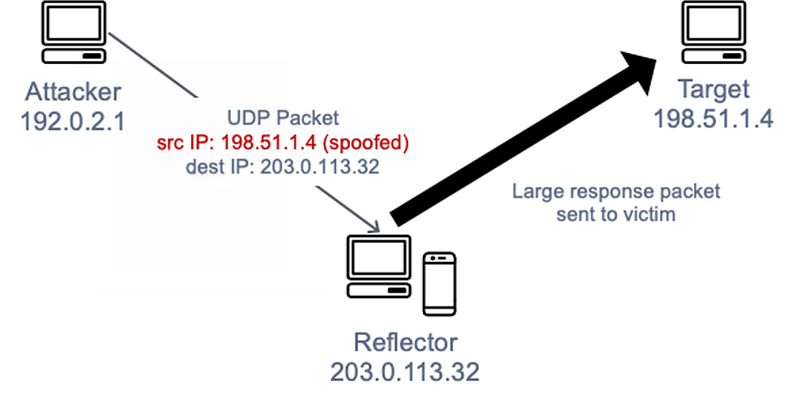

Challenge — Reflection Attacks

Since QUIC is built on UDP, it is susceptible to reflection attacks, a common UDP exploit. Hackers forge the victim’s IP and send massive requests to a server, causing the server to flood the victim’s network with large volumes of data.

image source: https://docs.aws.amazon.com/whitepapers/latest/aws-best-practices-ddos-resiliency/udp-reflection-attacks.html

For instance, QUIC’s TLS handshake requires sending certificates and public keys to the client—a significant amount of data. A hacker could repeatedly send TLS requests to force the server to bombard the victim with these large payloads.

TCP avoids this problem by using a small-data handshake; it only sends certificates after receiving a Client ACK. If a hacker forges an IP, they won’t receive the SYN-ACK and thus cannot return the Client ACK.

The fundamental solution for QUIC remains a handshake where the server sends an encrypted token to the client and requires it to be returned. However, this method sacrifices the 0-RTT feature. Consequently, Cloudflare is exploring alternatives like ECDSA certificates or certificate compression to reduce the payload size.
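The token round trip can be sketched as follows. The server answers an unvalidated address with a small token and only sends the large handshake payload once that token comes back. An HMAC over the client address stands in for the article's "encrypted token"; the key, the function names, and the placeholder payload are assumptions.

```python
import hashlib
import hmac
import secrets

SERVER_KEY = secrets.token_bytes(32)  # hypothetical per-server secret


def issue_token(client_ip: str) -> bytes:
    return hmac.new(SERVER_KEY, client_ip.encode(), hashlib.sha256).digest()


def handle_initial(client_ip: str, token=None):
    """Return ('retry', token) for unvalidated addresses, else start the handshake."""
    if token is None or not hmac.compare_digest(token, issue_token(client_ip)):
        # Small reply: costs the claimed source address only a few bytes.
        return ("retry", issue_token(client_ip))
    return ("handshake", b"<certificate chain and key share>")


# A real client receives the token at its own address and echoes it back.
_kind, token = handle_initial("198.51.100.7")
print(handle_initial("198.51.100.7", token)[0])  # handshake

# An attacker spoofing the victim's IP never sees the token sent to the victim.
print(handle_initial("203.0.113.50")[0])         # retry
```

The extra round trip is exactly the cost the paragraph above describes: address validation and 0-RTT pull in opposite directions.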

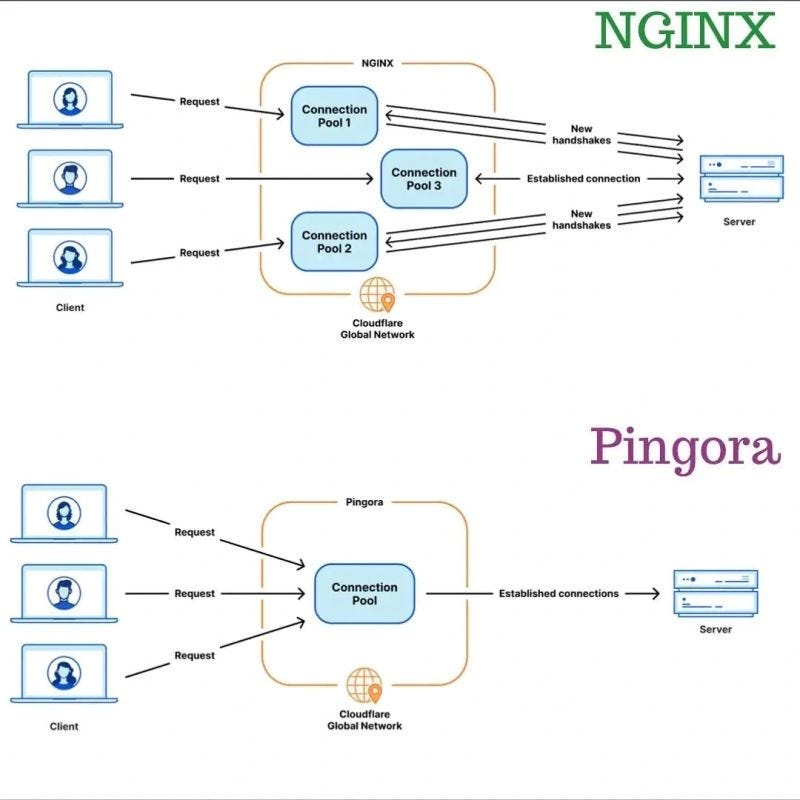

In addition to implementing QUIC in Rust, Cloudflare also developed its own proxy server, Pingora, to replace Nginx.

Why Did Cloudflare Replace Nginx with Pingora?

Nginx follows a process-based architecture, using the SO_REUSEPORT socket option to allow multiple processes to bind to the same port. The kernel then distributes incoming connections to these processes based on a hash of the 4-tuple: (src_ip, src_port, dest_ip, dest_port).

The downside of a process-based model is that implementing shared memory is complex and restrictive. For example, connections from the proxy to the origin server cannot be shared across processes. Furthermore, since Nginx is written in C, it carries inherent risks of memory-safety bugs, including memory leaks.

Cloudflare developed Pingora, a thread-based proxy written in Rust. While it retains an architecture similar to Nginx—with multiple kernel threads binding to the same port and each thread having its own independent epoll instance—it allows for the sharing of TCP connection information to origin servers across threads. Additionally, for scenarios involving exceptionally large payloads or file transfers, Pingora utilizes zero-copy techniques. This prevents the CPU overhead caused by copying massive amounts of data from kernel space to user space.

image source: https://www.linkedin.com/posts/pal-arnab_what-is-pingora-pingora-is-a-new-http-proxy-activity-7106102391397113856-B8rJ/

Moreover, Nginx carries significant developmental baggage. For instance, it often uses Lua scripts for feature extension, but Lua’s weak typing makes it difficult to maintain and results in lower performance. Nginx is also modularly built with many overlapping features; even unused modules can impact overall performance. Benchmark results show that Pingora delivers higher throughput, consumes less CPU, and offers greater stability compared to Nginx.

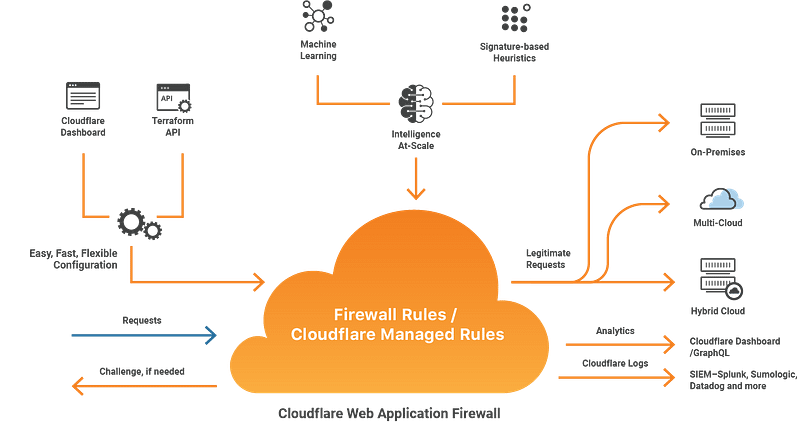

How Does Cloudflare Defend Against DDoS?

Anycast is inherently more resilient to DDoS attacks than Geo DNS. When a hacker resolves a domain to an IP and launches an attack, the requests are distributed to different locations via BGP, providing Layer 3 traffic shedding.

Other Layer 3 DDoS defense mechanisms include Unicast Reverse Path Forwarding (uRPF). In a DDoS attack, hackers often forge the source IP to avoid receiving server responses (and to hide their identity). The router checks its routing table to see if a valid path exists back to that source IP; if not, the source IP is considered forged, and the packet is dropped.
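A sketch of the uRPF check, covering both the loose variant described above (any route back to the source) and the stricter variant in which the best route back must use the interface the packet arrived on. The routing table, interface names, and function name are invented.

```python
import ipaddress

# Hypothetical routing table: (prefix, outgoing interface).
ROUTES = [
    (ipaddress.ip_network("198.51.100.0/24"), "eth0"),
    (ipaddress.ip_network("203.0.113.0/24"), "eth1"),
]


def urpf_accept(src_ip: str, in_iface: str, strict: bool = False) -> bool:
    """Accept a packet only if its source address is plausibly reachable."""
    addr = ipaddress.ip_address(src_ip)
    matches = [(net, iface) for net, iface in ROUTES if addr in net]
    if not matches:
        return False  # no route back at all: treat the source as forged
    if strict:
        # Strict mode: the best route back must leave via the arrival interface.
        _net, iface = max(matches, key=lambda m: m[0].prefixlen)
        return iface == in_iface
    return True


print(urpf_accept("198.51.100.7", "eth0"))               # True
print(urpf_accept("10.9.9.9", "eth0"))                   # False: no route back
print(urpf_accept("198.51.100.7", "eth1", strict=True))  # False: wrong interface
```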

At Layer 4, SYN Flood is a common DDoS attack. During a TCP handshake, the client sends the first SYN packet. Upon receiving it, the server records the TCP state (source IP, port, initial sequence number, etc.) in the kernel and returns a SYN-ACK. A SYN flood sends a massive volume of SYN packets to exhaust the kernel’s memory by filling it with these states.

One solution for SYN floods is SYN Cookies. Instead of storing the state in memory, the server encodes the state into a number used as the initial sequence number in the SYN-ACK. When the client responds with its final ACK (whose acknowledgment number = initial sequence number + 1), the server recomputes and verifies the value, decodes the state, and only then allocates memory for the connection.
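The stateless round trip can be sketched as below. Real SYN cookies also pack values such as the MSS into the sequence number; this simplified version derives a 32-bit value from the 4-tuple, a coarse time slot, and a secret, all of which are illustrative choices.

```python
import hashlib
import hmac

SECRET = b"rotate-me-regularly"  # hypothetical server-side secret


def make_cookie(src_ip, src_port, dest_ip, dest_port, time_slot: int) -> int:
    """Derive the SYN-ACK's initial sequence number without storing any state."""
    msg = f"{src_ip}|{src_port}|{dest_ip}|{dest_port}|{time_slot}".encode()
    digest = hmac.new(SECRET, msg, hashlib.sha256).digest()
    return int.from_bytes(digest[:4], "big")


def ack_is_valid(src_ip, src_port, dest_ip, dest_port, ack_number: int, now_slot: int) -> bool:
    """Recompute the cookie for the current and previous slot and compare."""
    for slot in (now_slot, now_slot - 1):
        expected = (make_cookie(src_ip, src_port, dest_ip, dest_port, slot) + 1) % 2**32
        if ack_number == expected:
            return True
    return False


conn = ("198.51.100.7", 40001, "203.0.113.1", 443)
isn = make_cookie(*conn, time_slot=10)          # sent in the SYN-ACK
client_ack = (isn + 1) % 2**32                  # echoed by a genuine client
print(ack_is_valid(*conn, client_ack, now_slot=10))  # True
```

Only after `ack_is_valid` succeeds would the server allocate memory for the connection.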

Additionally, Cloudflare uses eBPF to insert hooks at the kernel level. These hooks monitor PPS (Packets Per Second) and BPS (Bits Per Second) to dynamically adjust Layer 4 rate-limiting strategies.
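As an illustration of what a PPS-based limit might look like, here is a token-bucket limiter written in plain Python. This is an assumed strategy chosen for clarity, not Cloudflare's eBPF implementation; the class name, limits, and explicit timestamps are all hypothetical.

```python
class PacketRateLimiter:
    """Token bucket: allows `pps_limit` packets per second with a small burst."""

    def __init__(self, pps_limit: float, burst: int):
        self.rate = pps_limit
        self.capacity = burst
        self.tokens = float(burst)
        self.last = 0.0

    def allow(self, now: float) -> bool:
        # Refill in proportion to the elapsed time, capped at the burst size.
        elapsed = now - self.last
        self.last = now
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


limiter = PacketRateLimiter(pps_limit=10, burst=5)
burst_results = [limiter.allow(now=0.0) for _ in range(6)]
print(burst_results)           # [True, True, True, True, True, False]
print(limiter.allow(now=1.0))  # True: the bucket has refilled
```

A dynamic strategy would adjust `pps_limit` as the observed traffic changes.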

Finally, Layer 7 rate limiting is not merely rule-based. Through Bot Management, Cloudflare shares attack data across its global proxies. By analyzing behavioral patterns, if an attack is detected in the US, the relevant data is synchronized to Japan, allowing Japanese proxies to block the same bot preemptively.

image source: https://blog.cloudflare.com/cloudflare-bot-management-machine-learning-and-more/

Conclusion

What Cloudflare built is not just a CDN. It is a globally distributed system where routing policy, kernel behavior, transport protocols, and application logic are tightly coupled.

Once you see this, tools like eBPF stop being “advanced tricks.” They are no longer optional observability tools — they become inevitable building blocks.

After reading this article, Cloudflare’s incident reports will no longer feel mysterious. You’ll start recognizing the real constraints and trade-offs behind those failures.

References

https://blog.cloudflare.com/unimog-cloudflares-edge-load-balancer/

https://blog.cloudflare.com/the-road-to-quic/

https://blog.cloudflare.com/how-we-built-pingora-the-proxy-that-connects-cloudflare-to-the-internet/

https://www.cloudflare.com/ru-ru/learning/dns/what-is-anycast-dns/

https://blog.cloudflare.com/async-quic-and-http-3-made-easy-tokio-quiche-is-now-open-source/

https://medium.com/codavel-blog/quic-vs-tcp-tls-and-why-quic-is-not-the-next-big-thing-d4ef59143efd