Stop Trying to Build the "Perfect" System: A Practical Guide to CAP Theorem

Why every distributed system is a game of trade-offs, from etcd’s consistency to Cassandra’s availability.

Today, I’m going to talk about the famous CAP theorem in a practical way by answering the following questions:

What is a distributed system and why do people use it?

What is the CAP theorem?

How do you choose between designing a CP or an AP system?

What is a distributed system and why do people use it?

A distributed system consists of multiple independent machines that cooperate by exchanging messages over a network. Its main benefits are availability and the flexibility to scale.

When multiple machines work together to accomplish tasks, you don’t have to worry if one or two machines stop working unexpectedly — because the others continue operating.

Additionally, when the system encounters a sudden surge in traffic, you can add new machines without downtime. In contrast, vertical scaling (upgrading a single machine) typically requires downtime and is capped by the physical limits of one machine.

Although distributed systems offer critical advantages, designing a perfect distributed system is impractical.

That’s where the CAP theorem comes into play to guide architectural decisions.

What is the CAP theorem?

In a system with many machines, it’s unrealistic to expect everything to work flawlessly. Machines communicate over networks, and networks are inherently unreliable: routers fail, bandwidth becomes saturated, and packets are delayed or dropped. You therefore cannot assume that messages will be delivered on time.

As a system architect, you must design for failure and build robustness into your system.

So what happens when some machines can’t communicate with each other?

In a distributed database, a machine crash or a lost network connection can lead to inconsistent data — because some machines may miss updates and fall out of sync. As a result, users accessing different servers may see different versions of the same data.

This is the moment when you have to make a trade-off:

If you prioritize data consistency, the system must reject or delay requests it cannot answer consistently until the unreachable machines recover and catch up on the data they missed.

If you prioritize availability, you allow the system to continue operating despite temporary inconsistencies.

This trade-off is at the heart of the CAP theorem:

Consistency and Availability cannot both be fully guaranteed when your distributed system must tolerate network partitions.

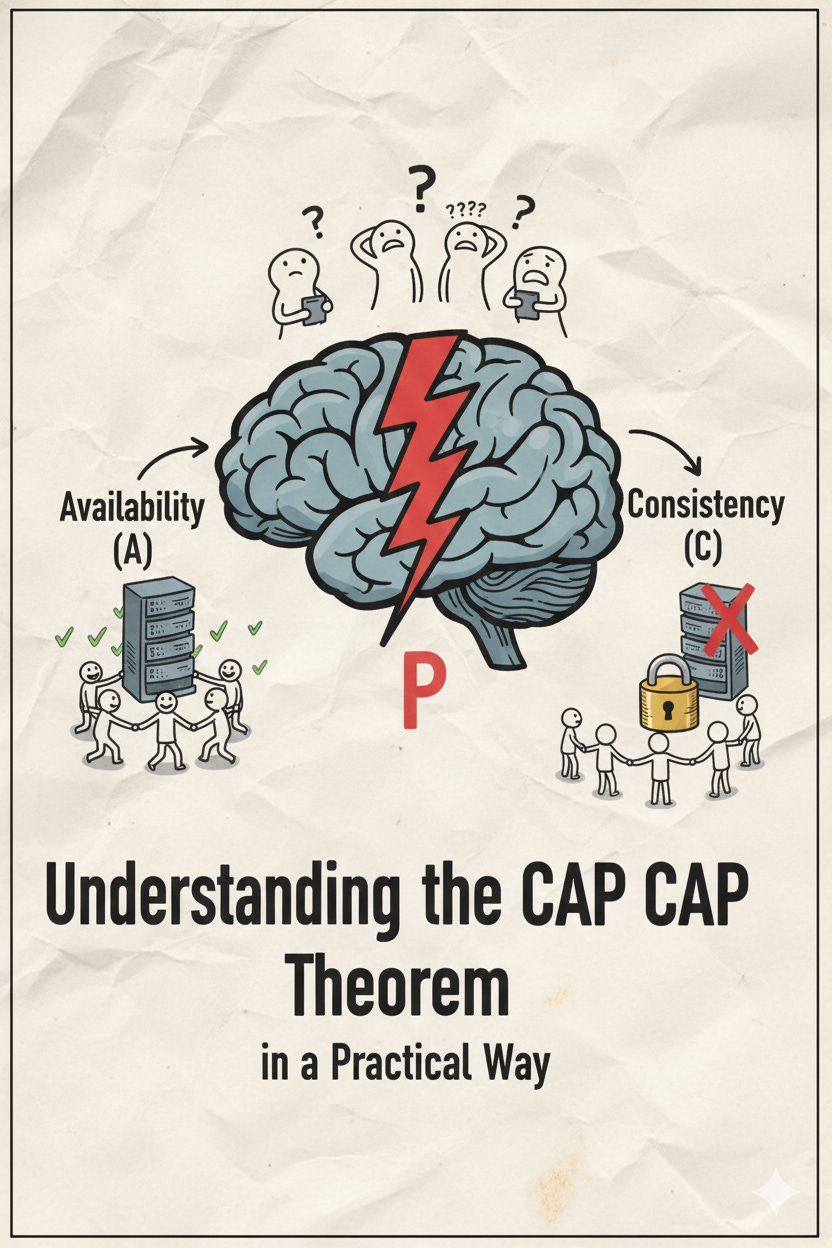

CAP stands for:

P — Partition Tolerance

A — Availability

C — Consistency

When a partition occurs, a distributed system has to give up either consistency (C) or availability (A). Partition tolerance (P) cannot be sacrificed, because a distributed system must assume that network partitions will occur.
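To make the trade-off concrete, here is a minimal Python sketch of a two-replica store. The `TinyCluster` class, its `mode` flag, and the `partitioned` switch are hypothetical names invented for illustration, not part of any real database; a real system detects partitions through timeouts rather than a flag.

```python
class Replica:
    def __init__(self, name):
        self.name = name
        self.value = None


class TinyCluster:
    """Two replicas; `mode` is "CP" or "AP" (a toy model, not a real database)."""

    def __init__(self, mode):
        self.mode = mode
        self.a = Replica("a")
        self.b = Replica("b")
        self.partitioned = False  # True when a and b cannot reach each other

    def write(self, replica, value):
        other = self.b if replica is self.a else self.a
        if self.partitioned:
            if self.mode == "CP":
                # Refuse the write: it cannot be copied to the other replica.
                raise RuntimeError("unavailable during partition")
            # AP: accept the write locally and let the replicas diverge for now.
            replica.value = value
            return
        # Healthy network: apply the write on both replicas.
        replica.value = value
        other.value = value


# CP mode: the write during the partition is rejected, replicas stay in sync.
cp = TinyCluster("CP")
cp.write(cp.a, "v1")
cp.partitioned = True
try:
    cp.write(cp.a, "v2")
    cp_accepted = True
except RuntimeError:
    cp_accepted = False

# AP mode: the write is accepted, but the two replicas now disagree.
ap = TinyCluster("AP")
ap.write(ap.a, "v1")
ap.partitioned = True
ap.write(ap.a, "v2")

print(cp_accepted, cp.a.value, cp.b.value)  # False v1 v1
print(ap.a.value, ap.b.value)               # v2 v1
```

The CP cluster gives up availability (the second write fails), while the AP cluster gives up consistency (a reader on replica `b` still sees `v1`).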

How do you choose between designing a CP or an AP system?

Now that you understand the CAP theorem, let’s explore how to choose between CP and AP systems using database systems as examples.

Database users primarily care about read and write operations, and different business contexts put more load on one or the other. Your system should therefore prioritize the more critical operation.

If your system handles heavy write throughput:

You will need multiple machines to handle write requests. However, with multiple writers, maintaining consistency becomes more challenging, because it’s difficult to reach consensus among multiple leaders.

Since read consistency is less critical in this scenario, temporary data inconsistencies can be tolerated. In this case, you should choose an AP system. The system prioritizes availability, ensuring that users can continue writing new data even when some machines are down.

Cassandra: An AP System Example

Cassandra is a distributed database optimized for high write throughput and is a classic example of an AP system.

It accepts writes on any node and replicates them to the other replicas. Depending on the configured consistency level, it can acknowledge a write before every replica has applied it. When replicas hold conflicting versions, Cassandra resolves them with a timestamp-based Last-Write-Wins (LWW) rule, in which the write with the newer timestamp overwrites the older one.
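A timestamp-based Last-Write-Wins merge can be sketched in a few lines of Python. The `Versioned` type and `lww_merge` function are illustrative assumptions, not Cassandra's actual implementation, and the tie-break on equal timestamps is chosen here only so that the result is deterministic.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Versioned:
    value: str
    timestamp: int  # supplied by the writer, e.g. microseconds since epoch


def lww_merge(a: Versioned, b: Versioned) -> Versioned:
    """Keep the version with the newer timestamp.

    Ties are broken on the value (an assumption of this sketch) so that
    every replica picks the same winner regardless of argument order.
    """
    return max(a, b, key=lambda v: (v.timestamp, v.value))


# Two replicas received different writes for the same key.
replica_1 = Versioned("alice@old.example", timestamp=100)
replica_2 = Versioned("alice@new.example", timestamp=105)

winner = lww_merge(replica_1, replica_2)
print(winner.value)  # alice@new.example
```

Because the winner depends only on the two versions and not on the order in which a replica sees them, replicas that exchange updates converge on the same value. The cost is that a write carrying an older timestamp, for example from a client with a lagging clock, is silently discarded.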

As a result, occasional read inconsistencies can occur, but Cassandra prioritizes write availability and guarantees eventual consistency, allowing it to scale extremely well.

If your system prioritizes read consistency:

You may deploy several machines to handle reads and boost read performance, but only one machine should handle writes to avoid conflicts.

With a single source of truth for writes, you can ensure that every update is consistently synchronized across the system. However, if the single write node crashes, the system must elect a new write node — a process that causes temporary downtime, sacrificing availability. So, consistency is guaranteed, but availability is compromised during failures.

etcd: A CP System Example

etcd is a distributed key-value store primarily used for storing system metadata.

Because metadata is at the heart of system coordination — for example, Kubernetes uses etcd to store cluster state and scheduling information — data inconsistency can lead to inconsistent system behavior and unpredictable errors. Moreover, metadata is typically read very frequently but updated relatively infrequently.

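To guarantee strong consistency, etcd uses the Raft consensus algorithm: the leader replicates each update to the followers, and the update is committed and becomes visible only after a majority of the cluster has stored it. Follower nodes can also serve read requests, which improves read performance. By default etcd reads are linearizable, so a follower first confirms with the leader that its data is current; serializable reads skip that check for lower latency but may return stale data.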
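Raft considers an update committed once a majority of cluster members have stored it, which is why the minority side of a partition cannot accept writes. The arithmetic can be sketched as follows; the function names are hypothetical helpers, not part of etcd's API.

```python
def majority(cluster_size: int) -> int:
    """Smallest number of members that forms a majority."""
    return cluster_size // 2 + 1


def is_committed(acks: int, cluster_size: int) -> bool:
    """True once enough members (leader included) have stored the update."""
    return acks >= majority(cluster_size)


def tolerated_failures(cluster_size: int) -> int:
    """How many members can be down while writes still commit."""
    return cluster_size - majority(cluster_size)


# A 5-node cluster split by a partition into groups of 3 and 2 members.
print(majority(5))            # 3
print(is_committed(3, 5))     # True:  the 3-member side keeps accepting writes
print(is_committed(2, 5))     # False: the 2-member side must reject writes
print(tolerated_failures(5))  # 2
print(tolerated_failures(4))  # 1
```

Note that a 4-node cluster tolerates no more failures than a 3-node one, which is one reason such clusters are usually deployed with an odd number of members.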

Conclusion

The CAP theorem reminds us that no distributed system can simultaneously guarantee consistency, availability, and partition tolerance. When designing a system, it’s crucial to understand your business priorities, choose the right technologies, and make conscious trade-offs.